Servers

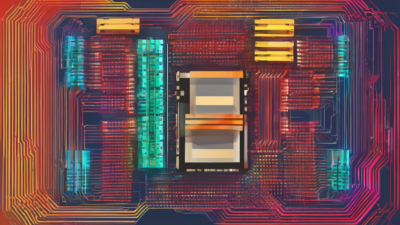

Best Dedicated Servers for High Traffic Sites Guide

The best dedicated servers for high-traffic sites provide the raw computational power, bandwidth, and reliability that such sites demand. This guide explains what makes a dedicated server suitable for high-traffic environments, which specifications to prioritize, and which providers stand out in 2026.
