Servers

Scale Llama 3 Triton Multi-GPU Setup Guide

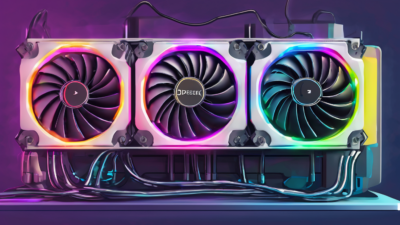

Scaling Llama 3 across multiple GPUs with Triton can substantially raise inference throughput for AI workloads, including deployments that must cope with Dubai's hot climate. This guide covers Docker deployment, Triton configuration, and regional adjustments for UAE enterprises. It targets up to 8x throughput on H100 clusters.
